How To Train Multimodal LLMs To Understand And Interact With Text, Image, Video And Audio: Model Evaluation: Dataset Construction

If you missed out on the previous article of this series, please click the link In our previous articles, we gave an overall concise introduction to the world of multimodal Large Language Models (LLMs), an overview of their background, methods, how to train them and model evaluation.

- How To Train Multimodal LLMs To Understand And Interact With Text, Image, Video And Audio

- How To Train Multimodal LLMs To Understand And Interact With Text, Image, Video And Audio: Model…

- How To Train Multimodal LLMs To Understand And Interact With Text, Image, Video And Audio: Model…

- How To Train Multimodal LLMs To Understand And Interact With Text, Image, Video And Audio: Model…

Dataset Construction

The collection of multimodal instruction-following data is a key to train multi-modal language models. The collection methods can be broadly categorized into open-source dataset adaptation and self-instruction. We illustrate these two methods sequentially.

1. Open-source dataset

Open-source datasets are valuable repositories of high-quality, structured data that are carefully curated and organized for the advancement of various fields of computer vision.

Over the years, researchers and data scientists have recognized the unparalleled wealth of information saved within these datasets, leading to numerous studies and experiments that draw upon them.

For training M-LLM, one particular area of interest has been the utilization of these datasets, i.e. image captioning[4] and Visual Question Answering (VQA)[5] datasets to construct datasets formatted in the instruction format.

Delving deeper into the specifics, consider the transformation process of VQA datasets.

Originally, the data samples in VQA datasets are presented as input-output pairs. In these pairs, the “input” portion consists of an image, accompanied by a natural language question related to the visual content of the image.

The “output”, on the other hand, is the textual answer, which is conditioned on the image and serves as a response to the posed question. This unique structure naturally lends itself to potential modifications and adaptations to instruction tuning for multi-modal LLMs.

Specifically, the input-output pairs can seamlessly form the multimodal input and response, closely resembling the instructional samples, an example of which can be visualized in Figure 6.

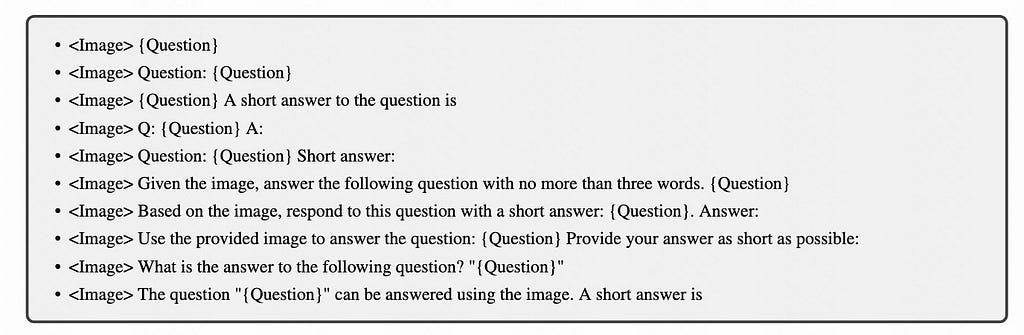

Transitioning to the task of creating the instructions, which essentially act as detailed task descriptors, there are a couple of noteworthy methods.

One approach is to manually design these instructions, which involves human experts hand-crafting various potential instructions. These are then sampled and integrated into the training process.

To illustrate this, consider the instruction templates created specifically for the VQA datasets, a detailed version of which is presented in Figure 6.

In order to ensure a robust and diverse set of instructional tuning data while taking into account their accessibility, researchers have compiled an extensive range of publicly available vision-language datasets, meticulously shaping them into the appropriate instruction tuning format.

The sota work InstructBLIP[6], as illustrated in Figure 7, utilized a comprehensive array of 11 distinct task categories, drawing from a vast repository of 26 diverse datasets.

These encompass various domains, including but not limited to image captioning image captioning integrated with reading comprehension, visual reasoning image question answering, knowledge-grounded image question answering image question answering coupled with reading comprehension, image question generation (derived from existing QA datasets), video question answering, interactive visual conversational question answering, image classification, and the innovative LLaVA-Instruct-150K dataset[7].

However, as technological advancements surge ahead, semi-automatic methods, particularly those leveraging the capabilities of advanced language models like GPT, are gaining attention.

Some research endeavors involve manually crafting a few foundational or “seed” instructions. These seed instructions then serve as prompts for GPT, which in turn generates a broader and more diverse set of instructions.

One of the advantages of utilizing advanced language models like GPT in instruction creation is the possibility of producing a more extensive set of varied and nuanced instructions than a human expert might conceive.

This ensures not only diversity in the data but also increased comprehensibility and robustness in the models trained on them.

The GPT-assisted creation process offers a balance between manual expertise and automated vastness, aiming to maximize the quality and depth of the instructional data.

For instance, an initial seed instruction crafted manually might read, “Based on the visual elements present in the image, determine the logical relationship and reason the given scenario.”

Feeding this into GPT, the model might generate diverse iterations such as “Evaluate the image and identify the logical connections among its elements,” or “Examine the visual content and deduce the underlying relationships.”

This enables a broader spectrum of training data which in turn trains models to be more adaptable and efficient.

2. Self-instruct dataset and mixed dataset

Although open-source datasets are information-rich, they often don’t fully capture real-world scenarios.

For instance, they might miss out on the multifaceted and ever-evolving nature of human interactions, which can encompass multiple phases and topic transitions. To solve this problem, the research community is increasingly embracing a method known as “self-instruction.”

In essence, self-instruction involves using Large Language Models (LLMs) like ChatGPT and GPT-4 to generate new datasets. It starts with a collection of handcrafted “seed examples.” Building on these foundational samples, the models produce a diverse array of samples.

LLaVA [7] innovated this by translating visual data into text and feeding this multimodal content to GPT-4, birthing the LLaVA-Instruct-150k dataset.

Such pioneering work has inspired other initiatives, such as MiniGPT-4 [8], ChatBridge [9], GPT4Tools [10], and DetGPT [11], each customizing the self-instruction methodology to suit their objectives.

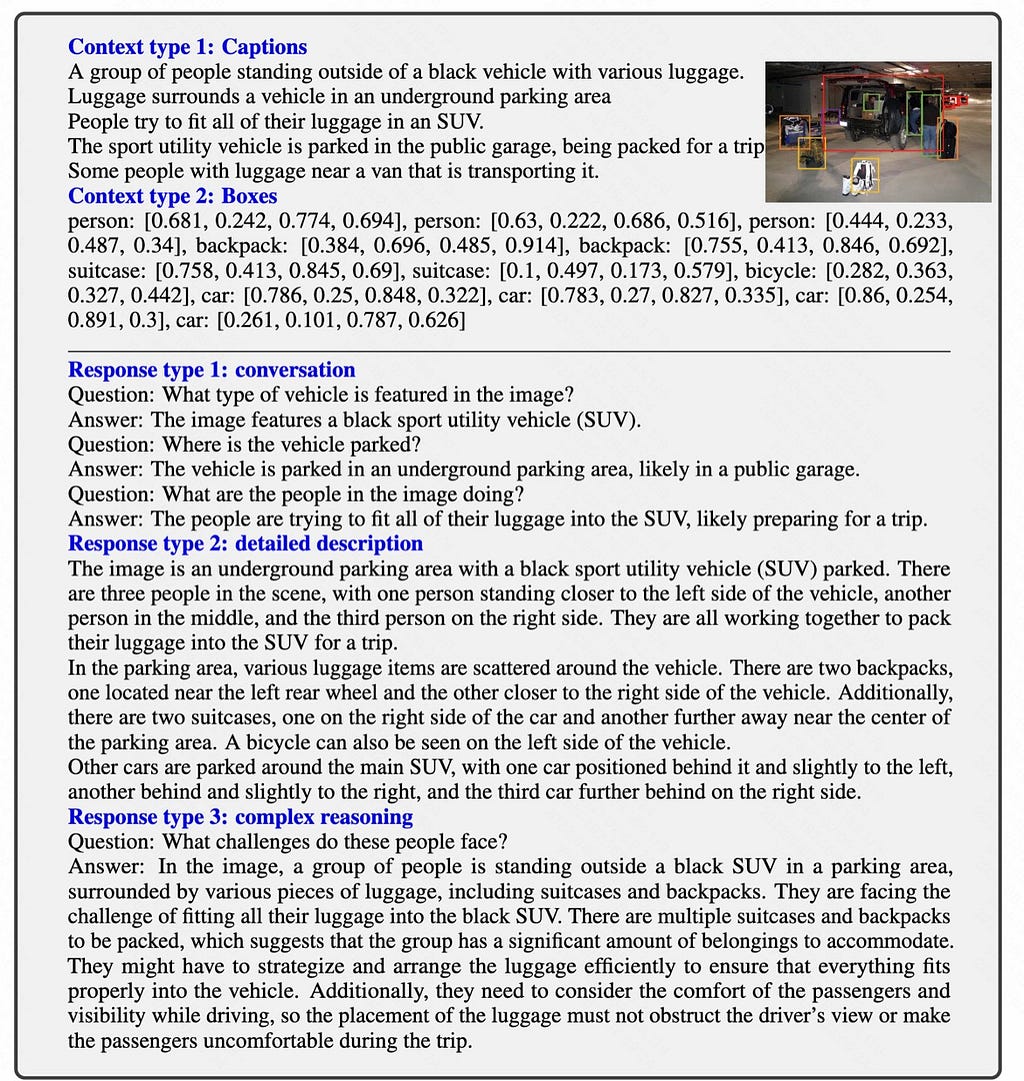

Specifically, the researchers of LLaVA first transform images into text sequences recognizable by LLMs.

For golden-like COCO images [4], they crafted three unique sets of instruction-following data. Exemplary samples of each kind can be found in Figure 9 bottom section.

For each category, an initial batch of manually curated examples was developed. These samples, the only human annotations in the data collection phase, served as the seeds for in-context learning with GPT-4.

Below are the three types:

Conversation: The researchers envisioned a dialogue between an assistant and a person inquiring about a particular photograph. The assistant responds as if directly observing the image, addressing the posed questions. A diverse array of questions concentrated on the image’s visual components. These covered topics like object identification, enumeration, actions, placements, and relative positions. Crucially, only questions with unambiguous answers were included.

Detailed Description: To offer a comprehensive image description, the researchers devised a series of questions. After querying GPT-4, they honed this list. For every image, a randomly chosen question from this list guided GPT-4 in crafting a detailed narrative.

Complex Reasoning: Going beyond mere visual content, they also introduced questions demanding complex reasoning. Answers to such questions typically required a systematic reasoning approach rooted in robust logic.

On another front, the “Hybrid Composition” method is also useful when constructing instruction-tuning datasets. Instead of solely leveraging the multi-modal instruction data, researchers recognize the merit of traditional text-only conversational data in honing a model’s dialogic and instruction-following capabilities.

A standout instance is LaVIN[12], which fuses conventional text-only data with cutting-edge Multi-modal instruction data.

MultiInstruct [13] delves deeper, investigating optimal ways to harmonize single-mode (text) and multimodal data. Their strategies range from data selection and sequencing to advanced tactics like Adapter-based sequential tuning. Interestingly, their results suggest that integrating diverse data sources can match or even surpass the efficacy of exclusive multimodal data reliance.

Reference List:

[4] Chen X, Fang H, Lin T Y, et al. Microsoft coco captions: Data collection and evaluation server[J]. arXiv preprint arXiv:1504.00325, 2015. [5] Antol S, Agrawal A, Lu J, et al. Vqa: Visual question answering[C]//Proceedings of the IEEE international conference on computer vision. 2015: 2425–2433. [6] DAI W, LI J, LI D, et al. InstructBLIP: Towards General-purpose Vision-Language Models with Instruction Tuning[J]. [7] Liu H, Li C, Wu Q, et al. Visual instruction tuning[J]. arXiv preprint arXiv:2304.08485, 2023. [8] Zhu D, Chen J, Shen X, et al. Minigpt-4: Enhancing vision-language understanding with advanced large language models[J]. arXiv preprint arXiv:2304.10592, 2023. [9] Zhao Z, Guo L, Yue T, et al. Chatbridge: Bridging modalities with large language model as a language catalyst[J]. arXiv preprint arXiv:2305.16103, 2023. [10] Yang R, Song L, Li Y, et al. Gpt4tools: Teaching large language model to use tools via self-instruction[J]. arXiv preprint arXiv:2305.18752, 2023. [11] Pi R, Gao J, Diao S, et al. DetGPT: Detect What You Need via Reasoning[J]. arXiv preprint arXiv:2305.14167, 2023. [12] Luo G, Zhou Y, Ren T, et al. Cheap and quick: Efficient vision-language instruction tuning for large language models[J]. arXiv preprint arXiv:2305.15023, 2023. [13] Xu Z, Shen Y, Huang L. Multiinstruct: Improving multi-modal zero-shot learning via instruction tuning[J]. arXiv preprint arXiv:2212.10773, 2022.This article is part of a series of How To Train Multimodal LLMs To Understand And Interact With Text, Image, Video And Audio. Stay tuned for more information.

In Plain English 🚀

Thank you for being a part of the In Plain English community! Before you go:

- Be sure to clap and follow the writer ️👏️️

- Follow us: X | LinkedIn | YouTube | Discord | Newsletter

- Visit our other platforms: CoFeed | Differ

- More content at PlainEnglish.io

Overview of Multimodal LLMs — Algorithm, Dataset And Evaluation: Dataset Construction was originally published in Artificial Intelligence in Plain English on Medium, where people are continuing the conversation by highlighting and responding to this story.

* This article was originally published here